Preface

This blog post is a collection of the more technical chapters from my short book, Showdown: Virtual Reality Carnage. I’m publishing it in the hope that someone finds solutions or fresh ideas for their existing challenges.

Introduction

The intention of this book is to present the main lessons I’ve learned in VR over the course of the last few years. Some of these are only indirectly related to VR, such as the significant performance requirements that in turn call for various efficient systems to cope with them. The basis upon which these techniques are built is ShowdownVR, a virtual reality game that I’ve made. The information is relayed in a more personal, relaxed, story-driven style than a standard white paper, to hopefully entice the reader to read on and to provide a more subjective take on various subjects, such as the apparent future of technological evolution and different gameplay elements that might prove useful to designers.

In March 2014, Epic Games released Unreal Engine 4; two short months later, I was all over its source code. It was an incredibly bold move by Epic and a clear shift in the company’s direction. It’s hard to imagine the pressure everyone involved felt at the time of executing this plan, releasing Unreal’s code to the world, for free. I imagine it was scary as hell. But it was this move that sealed the deal for me to move over to VR game development full time. I started my VR development in 2013 (Rift DK1) with a decade of programming experience behind me and, to no one’s surprise, had done most of my work in OpenGL and DX. Like so many programmers in this industry, I too have written various rendering demos, engines and raytracers for my own use, and occasionally slapped on a poor excuse for an editor and called it a game engine. But at the same time, being a somewhat disciplined perfectionist, I stayed aware that it’s really just a fun exercise, and that writing your own production-quality engine as one man is practically impossible these days. Fact is, Unreal has hundreds of man-years invested in it; that alone is more than a human lifespan, and even if you were to write the whole game engine, you would end up with decades-old technology. And so, over time you realize that there are really just two options: do your own thing and build a big team around it to maintain it and stay competitive, or join a company that is already doing it. I did neither; I bided my time until something else came along. And it did: an open-sourced game engine, one that is in full-steam development but available to anyone to mess with. Unreal Engine 4 is the most powerful engine in the world, not necessarily because it has all the best solutions implemented; in fact, at the moment, some of the realtime GI work in CryEngine, for example, is more advanced. 
It’s the most powerful because Epic pushes it forward vigorously, produces tools, fixes bugs and gives you the opportunity to be the chief technology officer of your own branch/version of their engine. This, to me, is pure magic. It gives some of us exactly what we need to differentiate our work from others: total control over the final product.

John Carmack and me at Oculus Connect 2 in Los Angeles, 2015.

I’ve always been inspired by John Carmack and id Software, and have crawled through their released engines and games to educate myself. And I have to thank John for always pushing for openness; as he said, “Programming is not a zero-sum game. Teaching something to a fellow programmer doesn’t take it away from you. I’m happy to share what I can, because I’m in it for the love of programming.” And I couldn’t agree more.

It’s stunning to watch how Tim Sweeney is bringing the vision of unifying tools across industries to life via an open-sourced, free game engine. Thank you, Tim and Epic. The exciting thing about this industry is that it’s always evolving; every single day things change and improve, so engines that don’t get vigorously maintained fall behind quickly. Bleeding edge tech is at most a few months old. After that, it inevitably gets replaced with fresher iterations of technology. And this is why I think the direction we’re moving in is the right one: a more unified, open source set of tools for crafting our virtual worlds.

Rendering

For the majority of the development, I knew the final hardware specifications that ShowdownVR was targeting: GTX 970 and Intel i5-4590 level hardware or, and this is important, better. As a PC gamer and developer, I value the diversity of performance that we get by buying various high-performance pieces of hardware, and as such, it was very important to include a set of options for people with the newest hardware to play with. The min. spec. hardware is pretty powerful when running games in normal modes at 60 Hz, or even more easily at 30 Hz (personally, I can’t stomach low framerates), but in VR, well, I invite you to go out and take a look at the games on the market. The majority of them, you will find, have simplistic mobile-style graphics, with a few exceptions from a couple of AA and AAA developers. There is an objective reason behind this. 90 Hz stereo on a ~3.5 TFLOPS GPU and a 3.3 GHz 4 core/4 thread CPU is no picnic in the park, especially with all the overhead of modern PCs. Now let’s dive directly into this chapter and take a look at the tricks, techniques and tools that compose the final visuals and overall feel of the world.

Renderer

A few words about the renderer and the different options I considered. Unreal Engine 4 uses a deferred renderer for desktop platforms. There are a lot of reasons why one renderer design might be better suited for certain things than another, and we won’t go into details here. The two main reasons for looking into different renderer designs were lower overhead and easily applicable rendering techniques like MSAA. The Oculus Forward Renderer, released in May, was in the ballpark of the design I was looking for, and I had to give it a go. Showdown was never going to just open in a different Unreal version, so the first thing to do was to port the code over. After a few days, it was clear that there were still gaps to fill and bugs to fix, and that some of the lighting features missing from Oculus’ version might just prove essential to the game’s design. In the end, I decided that it was clearly not a net benefit for this project. Even if I could fix every visual bug, there was no way to redesign the neatly optimized systems already built upon the current renderer design. There is also a bigger issue. This is not a heavily maintained branch of the engine, so bugs new and old were going to need a lot of time to get fixed, as I understood very well that this was a version designed around Oculus’ own game projects. I do support this effort from Oculus, and I’m going to use it mostly to study and pick out a few features here and there. The clear conclusion on my part to keep using the deferred renderer meant I was really going to have to continue stripping everything to the bone on my own, and a lot of this content relates to doing just that.

Instanced Stereo

Unreal Engine shipped with instanced stereo in 4.11, and since then it has given developers the ability to drastically reduce draw calls, leaving the CPU free to do other things. But to make all systems work with instancing, an additional rendering path had to be implemented. There is now a new rendering path called “Hybrid stereo” that combines the instanced approach with the more brute force, two-pass approach (left eye, right eye). Unreal Engine, when running in instanced stereo, ideally only renders the left eye and instances everything else. But not every rendering path can be instanced yet, and instanced objects can’t be instanced again, so that’s why combining both approaches comes in handy sometimes. The good thing about this is that you can make third party libraries that don’t explicitly support instancing work efficiently with the UE4 instanced stereo rendering system. It adds a “Hybrid stereo” check option for each component in the scene, so that you can manually select the parts of the scene that you want to render for each eye.

Instanced stereo that Epic built in is awesome. But you do have to make it work with other things, like third party libraries I mentioned, which issue draw calls on their own. Unreal draws instanced stereo by calling DrawIndexedInstanced while on DirectX (10 or higher), DrawElementsInstanced if running on OpenGL (3.1 or higher), and if you can’t draw meshes/objects by calling that yourself, then you have to render in two passes; a nastier alternative approach would be to hook up to DX, intercept, modify and forward draw calls. Again, it proves very convenient to have access to engine’s source code.
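As rough bookkeeping for the hybrid approach described above, the sketch below counts stereo draw calls: one for a component that can be instanced, two (one per eye) for a component that cannot. It is written in Python for brevity rather than as engine code, and the component names and the `stereo_draw_calls` helper are made up for illustration.

```python
def stereo_draw_calls(components):
    """Count draw calls for a list of (name, supports_instancing) pairs.

    Instanced components are drawn once for both eyes; everything else
    falls back to the two-pass path and is drawn once per eye.
    """
    return sum(1 if supports_instancing else 2
               for _name, supports_instancing in components)


# Hypothetical scene: two instanced meshes and an ocean drawn by a
# third party library that issues its own draw calls.
scene = [("rocks", True), ("island", True), ("ocean", False)]
print(stereo_draw_calls(scene))
```

With everything rendered in two passes, the same scene would need six draw calls instead of four.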

Hybrid Stereo: each color is one draw call per object/material it’s covering. The ocean gets a draw call per eye.

Occlusion

Since most static meshes are merged to reduce draw calls, there are not too many objects on the screen. Knowing that, it’s also a good idea to check how occlusion is calculated and how objects are subsequently culled. In UE4, the HZB occlusion system is on by default; it should reduce GPU and CPU cost, but it produces more conservative, less accurate results and ends up culling fewer objects. HZB should perform really well in scenes with a lot of objects, but since I went out of my way to reduce the number of objects on screen, this had to be evaluated. Below, you will find that we’re not using AO and SSR. Both of these features rely on HZB, but since we’re not using either of them, this technique ended up eating way too much GPU time to justify itself. Turning it off via the “r.HZBOcclusion 0” command should save a lot of frame time (it really depends on the scene; in my case ~0.5 ms), but there’s a kind of bug or catch in the engine that should get fixed. HZB is used by ambient occlusion, even if you toggle AO off with “showflag.AmbientOcclusion 0”. This won’t stop HZB from getting built, because at this moment the code relies on “r.AmbientOcclusionLevels” being equal to 0 in order to consider AO off. The problem is that AmbientOcclusionLevels is set to -1 by default (meaning the engine decides on its own what AO level to use), so even if you have SSR off, HZBOcclusion off and AmbientOcclusion off (via showflag), there still won’t be any performance gain, because HZB is still being built. You must additionally set AmbientOcclusionLevels to zero. This gets a bit more confusing, given that the description of occlusion levels is “Defines how many mip levels are using during the ambient occlusion calculation”, because no matter how many mip levels should get used, none are used if AO is off. So, yay, saved some more time!
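The catch is easier to see as a truth table. The sketch below is a toy Python model of the behavior described above, not the engine’s actual code: the function name and parameters are invented, and the only facts it encodes are that SSR, HZB occlusion and AO each need the HZB, and that AO counts as “on” unless r.AmbientOcclusionLevels is exactly 0.

```python
def hzb_gets_built(ssr_enabled, hzb_occlusion_enabled, ambient_occlusion_levels):
    """Toy model: is the HZB still built with these settings?

    ambient_occlusion_levels mirrors r.AmbientOcclusionLevels, where the
    default of -1 means "engine decides" and therefore still counts as AO on.
    The AO show flag is deliberately not a parameter, because per the text
    it has no influence on this decision.
    """
    ao_considered_on = ambient_occlusion_levels != 0
    return ssr_enabled or hzb_occlusion_enabled or ao_considered_on


# SSR off, r.HZBOcclusion 0, but AO levels left at the default of -1:
print(hzb_gets_built(False, False, -1))  # True, HZB is still built
# Same settings plus r.AmbientOcclusionLevels 0:
print(hzb_gets_built(False, False, 0))   # False, the cost is finally gone
```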

Shadows, reflections, GI

From the beginning, it was clear that any idea of a fully dynamic world was going to have to exist without any real dynamic shadows and realtime GI. These are the performance killers that have to be absent or substituted somehow. I ended up substituting both at various levels.

Shadows are found in the form of blob shadows. If you look under your feet, you will find one; it helps the illusion of presence a lot. These are simple translucent, unlit spheres with a sphere gradient and depth fade, not much more to it. It’s an old trick, much like capsule shadows in Unreal. There is a bit more to reflections. The final solution was dictated by more than one thing; for example, screen space effects like reflections in general produce unwanted artifacts (like side fade), which break the immersion and are directly dependent upon the player’s view. In this case, I was trying hard to push NVIDIA Multi-Res Shading to boost the game’s performance and give gamers something to tweak, test and configure. Unfortunately, the nature of this process, rendering at multiple resolutions, can magnify some of the screen space artifacts when scaling, as well as add new ones, like obvious hard lines between different resolutions where one part of the image gets upscaled to match its render target size. Before settling on the final solution, I tried many possible approaches, and for the longest time I used something I called HDRIGI. I’ll describe what it is and how you can use it, because it’s pretty easy and it might be appropriate for something you, the reader, are making. (Or feel free to skip to the final solution below.) This is not really a GI technique, but at the time, most of the environment was lit via this method and that’s how I referred to it internally. In reality, it used 56 static ambient cubemaps and lerped between them via two post process volumes, so the cubemaps would finish lerping and switch every 25.7 minutes of game time. This technique has a couple of good and bad sides. The good: the cubemaps are static, and there is no hitching like when you try to capture one in realtime; it’s pretty cheap and easy to implement because it relies on features that are standard in the engine. 
The bad: obviously, you have to use the ambient cubemap, so that feature will cost you some performance; it’s likely you will end up relying on SSR; and of course, you will have to capture and recapture cubemaps every time you change the environment. You can of course automate this last procedure if you’re working with the Unreal source code. In case you want to do it manually, this is how you do it. In your texture folder (for example), create a new texture of type “Cube Render Target”, and then in the scene, create a “Scene Capture Cube”. Now assign the created render target texture to the scene capture; then, while playing, you can pause the game, right click on the render target texture in your folder view and select “Create Static Texture”.
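To make the cubemap switching concrete, here is a minimal Python sketch of how a time of day could map to a pair of cubemaps and a blend weight. It assumes a 24-hour game day, which is consistent with 56 cubemaps at roughly 25.7 minutes each (1440 / 56 ≈ 25.7) but is not stated in the text; the function and constant names are made up for illustration.

```python
NUM_CUBEMAPS = 56
MINUTES_PER_DAY = 24 * 60  # assumption: one full day/night cycle


def cubemap_blend(game_minutes):
    """Return (current_index, next_index, alpha) for a time in game minutes.

    alpha runs from 0.0 to 1.0 across one ~25.7 minute slot and is the
    weight used to lerp from the current cubemap towards the next one.
    """
    slot_length = MINUTES_PER_DAY / NUM_CUBEMAPS
    t = (game_minutes % MINUTES_PER_DAY) / slot_length
    current = int(t) % NUM_CUBEMAPS      # modulo guards against float round-up
    following = (current + 1) % NUM_CUBEMAPS
    alpha = t - int(t)
    return current, following, alpha


# Noon: 720 minutes is exactly the start of slot 28.
print(cubemap_blend(720.0))
```

The last slot of the day blends back into cubemap 0, so the cycle has no seam at midnight.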

Final solution, reflections

The most efficient reflection solution that handled MultiRes and was free of SSR and other artifacts ended up being a conservative, non-HDR, 256 × 256, no-alpha-channel cubemap capture (fog, landscape, static meshes, sky light) that is then sampled in reflective materials. So each shader ends up applying reflections separately, calculating the reflection via the “Reflection Vector” node (defined as “-CameraVector + WorldNormal * dot(WorldNormal, CameraVector) * 2.0”), which takes the camera vector and the material surface normal as input. Unreal uses physically based shading, so at this point it’s on you to blend the reflections in materials as realistically as possible; most of the reflection and refraction constants can be found online, and of course, as pretty much no real-life material is pure, there are always multiple constants to lerp between. This eliminated the need for any SSR and ambient cubemap features; it saves a pretty significant chunk of frame time and looks better. This was especially important in ShowdownVR because of the enormous water surface that covers the world. All this setup is harder and requires more work, but the end result is pretty impressive!
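For readers who want to check the reflection math outside the material editor, here is a small Python sketch of the standard mirror reflection R = 2(N·V)N − V. It assumes the camera vector V points from the surface towards the camera and that both inputs are normalized, which is why V appears negated; the helper names are illustrative and this is not material or shader code.

```python
def dot(a, b):
    """Dot product of two 3-component vectors."""
    return sum(x * y for x, y in zip(a, b))


def reflection_vector(camera_vector, world_normal):
    """Mirror the camera vector about the surface normal.

    camera_vector: unit vector from the surface point towards the camera.
    world_normal:  unit surface normal.
    """
    d = dot(world_normal, camera_vector)
    return tuple(-c + n * d * 2.0 for c, n in zip(camera_vector, world_normal))


# Looking straight down the normal reflects straight back up.
print(reflection_vector((0.0, 0.0, 1.0), (0.0, 0.0, 1.0)))
# A 45 degree view flips the horizontal component and keeps the vertical one.
s = 0.5 ** 0.5
print(reflection_vector((s, 0.0, s), (0.0, 0.0, 1.0)))
```

The resulting vector is what you would use to look up the captured cubemap.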

Large wave, split into two opposite sky colors. See the color reflected from ocean surface.

It’s crucial that the cubemap capture is conservative. In UE4, you will notice that a scene capture, cube or 2D, is triggered either every frame or whenever the capture object/component is moved. In our case, this is unwanted behavior, because we need to capture the environment from the player’s perspective (otherwise reflections of any relatively close-by objects are going to be wrong; technically, all reflections would be wrong, but it would be much less noticeable for distant landscapes), and a capture component that follows the player would recapture constantly. To turn auto recapture off, you will have to modify the source code just a bit. Jump into “Source/Runtime/Engine/Private/Components/SceneCaptureComponent.cpp”, find the method “USceneCaptureComponentCube::SendRenderTransform_Concurrent()” and comment out “UpdateContent();”. This is the piece of code that gets called on transform change and automatically recaptures the cubemap.

From here on, I’ve conditioned the explicit cubemap capture with three rules. First, the capture can’t happen any more frequently than every 11th frame (I’ll explain the logic behind it later), and then only if one of the following two conditions is met: the player’s position has changed by more than a meter, or 21.6 minutes of game time have elapsed since the last capture (±0.03 of sun height). So the 11th frame rule is first and foremost there to limit the maximum number of occurrences. The first thing most of you probably asked yourselves is why the hell this is not limited by frame delta time. I’ve made the decision to limit some noncrucial things via integers, because this works as an automatic scaling system, like an additional cushion; the fewer frames your PC can process per second, the lighter the load is going to get. (Feel free to skip to GI below, if you already know how it works.) Instead of depending on delta time and always trying to do all 8 captures, we’re keeping these heavy operations away from each other. If you can’t get your head around it yet, let me give you an example that demonstrates the difference. Let’s say we schedule an operation every 33.33 ms (30 Hz); if we are running our program at 90 Hz, this means we’ll call this operation every 3rd frame, but imagine we drop down to 60 Hz: now we’re calling it every 2nd frame, and at 30 Hz, we’re calling it every frame. The end result is always 30 calls per second. But if we limit it by an absolute number of frames, let’s say we call our operation every 3rd frame, the same scenario looks like this: at 90 Hz = 30 calls, at 60 Hz = 20 calls, and at 30 Hz = 10 calls per second, adjusting the heavy load to the hardware. 
It’s also very important to spread the heavy operations out over separate frames; for example, never call the sky light capture and the cubemap capture on the same frame.
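The 90/60/30 Hz comparison above can be reproduced in a few lines of Python. This is only the arithmetic from the example, with made-up function names; it assumes a time-based operation can run at most once per rendered frame.

```python
def calls_per_second_time_based(fps, interval_ms):
    """Operation scheduled on a fixed time interval (delta time)."""
    # It cannot run more often than once per rendered frame.
    return min(fps, 1000.0 / interval_ms)


def calls_per_second_frame_based(fps, every_nth_frame):
    """Operation scheduled on an absolute frame count."""
    return fps / every_nth_frame


for fps in (90, 60, 30):
    timed = calls_per_second_time_based(fps, 1000.0 / 30.0)
    counted = calls_per_second_frame_based(fps, 3)
    print(f"{fps} Hz: time-based {timed:.0f}/s, every 3rd frame {counted:.0f}/s")
```

The time-based column stays at 30 calls per second no matter how much the machine struggles, while the frame-based column drops to 20 and 10, which is the “cushion” effect described above.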

Final solution, GI

Approximating one light bounce.

One of my closest friends is a masterful classical painter, and over the course of the development cycle we discussed the visuals numerous times. This helped me learn more about how and why I want certain visual cues. It was crucial to bring out the details from the darker parts of the scenes, and increasing contrast and gamma was not something I fancied, because it can quickly lead to an unnatural, washed-out look with almost everything in the gray area and no real black tones. Being physically correct (approximately) was what I strived for; any lighting or material art hacks should be left behind, so one of my wishes was to bring some kind of dynamic or semi-dynamic GI to the game. At first, I played around with the idea of using VXGI as an option for higher spec. machines. I was interested in this technique because of its wide configuration options for people to play with and its ability to represent multiple bounces in a fully dynamic world. In the end, I decided that the results, at least at this time, are not satisfactory on such wide open landscapes. Anything smaller and/or especially a bit more closed off looks magnificent, but that’s not the game I was making. So as mentioned above, I used a number of static ambient cubemaps for some time to kind of (statically) work around this as well, until switching from SSR to manual dynamic reflections. If only there was a cheap way to do one light bounce ... Well, there is. The way I solved this problem was with the Sky Light. I ended up using a “Sky Light” and scheduling its captures conservatively with absolute frame spacing, much like reflections, except that these happen 90 frames apart and every 36 minutes of game time (±0.05 of sun height). The reason you can call this GI is that there’s a neat magic trick you can do with the Sky Light object: if you uncheck “Lower Hemisphere Is Black”, you get an approximated one light bounce from your environment for free. 
This is exactly one of those magical solutions that work only in a specific type of game which uses certain rendering features, and everything in ShowdownVR checked out!
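The capture rules for reflections and the Sky Light can be sketched as a small gate object. This is a Python illustration under stated assumptions rather than the game’s code: the class, its fields and the `run_frame` helper are hypothetical, the Sky Light is assumed to have no movement condition, and “never on the same frame” is enforced by running at most one heavy capture per frame.

```python
import math


class CaptureGate:
    """Decides when one heavy capture is allowed to run (hypothetical helper)."""

    def __init__(self, min_frame_gap, game_minutes_gap, move_threshold_m=None):
        self.min_frame_gap = min_frame_gap        # absolute frame spacing
        self.game_minutes_gap = game_minutes_gap  # game time between captures
        self.move_threshold_m = move_threshold_m  # None: ignore player movement
        self.last_frame = None
        self.last_minutes = 0.0
        self.last_pos = (0.0, 0.0, 0.0)

    def wants_capture(self, frame, game_minutes, player_pos):
        if self.last_frame is None:
            return True  # nothing has been captured yet
        if frame - self.last_frame < self.min_frame_gap:
            return False  # hard limit, counted in frames rather than in time
        moved = (self.move_threshold_m is not None
                 and math.dist(player_pos, self.last_pos) > self.move_threshold_m)
        aged = game_minutes - self.last_minutes >= self.game_minutes_gap
        return moved or aged

    def mark_captured(self, frame, game_minutes, player_pos):
        self.last_frame = frame
        self.last_minutes = game_minutes
        self.last_pos = player_pos


def run_frame(gates, frame, game_minutes, player_pos):
    """Run at most one heavy capture this frame; return its name or None."""
    for name, gate in gates.items():
        if gate.wants_capture(frame, game_minutes, player_pos):
            gate.mark_captured(frame, game_minutes, player_pos)
            return name
    return None


gates = {
    "reflection": CaptureGate(11, 21.6, move_threshold_m=1.0),
    "sky_light": CaptureGate(90, 36.0),
}
print(run_frame(gates, 0, 0.0, (0.0, 0.0, 0.0)))  # reflection
print(run_frame(gates, 1, 0.0, (0.0, 0.0, 0.0)))  # sky_light, one frame later
```

Because the gates are checked in order and the function returns after the first capture, two heavy operations that become due together are automatically pushed onto consecutive frames.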

In-game GI solution replacing SSS shading model.

Even more, because the majority of land is covered with ocean, it’s very important to keep ocean shader as cheap as possible, and doing one light bounce ended up replacing the previous SSS shading model, so that now I could get similar results with the default Lit shading model. Read more about it under rendering, water. Overall, this approach is a win-win situation.

Signed Distance Fields

Scene visualization of mesh distance fields.

The good old mesh distance fields! These are one of my favorite features. They are so universally useful that without them, you would literally need additional people to pull off the same results. If you don’t know what they are, well, basically it’s a buffer, much like a bitmap, only that it stores distances from mesh surfaces at every point. In Unreal, DF (distance fields) are stored as volume textures. They are useful for ambient occlusion, soft shadows, fonts, decals, collision, ray tracing, GI, and much more. Still, they are in general classified as a high end feature and on a tight budget, this will kill performance. In ShowdownVR, however, I wanted to use GDF (global distance field) for a few key things, mainly particle effects/GPU collision and all water-related effects like depth, shore, foam, buoyant and dynamic object entering, traveling and exiting the ocean surface etc.
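If the idea of a distance field is new to you, the following Python sketch builds a tiny 2D one for a circle and uses it for a collision test. It is only meant to show the data layout and the lookup; Unreal stores its fields as 3D volume textures built from meshes, and every name here is invented for the example.

```python
import math


def build_distance_field(size, center, radius):
    """Grid of signed distances to a circle's surface (negative inside).

    Each cell stores the distance measured from the cell's center, much
    like a texel in a texture.
    """
    return [[math.hypot(x + 0.5 - center[0], y + 0.5 - center[1]) - radius
             for x in range(size)]
            for y in range(size)]


def is_colliding(field, x, y, particle_radius):
    """A particle collides when the surface is closer than its own radius."""
    return field[int(y)][int(x)] < particle_radius


field = build_distance_field(8, center=(4.0, 4.0), radius=2.0)
print(is_colliding(field, 4.2, 4.7, 0.1))  # inside the circle
print(is_colliding(field, 0.3, 0.9, 0.1))  # far away from it
```

The important property is that the lookup does not depend on what the camera sees, which is what makes distance fields attractive for the off-screen particle collision discussed below.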

First, the scene depth based GPU particle collision was killing the volcano lava particle effects: when you looked away, particles would stop colliding and fall straight through the rocks. The volcano shader lerps lava along its path, but I desperately wanted another layer on top of it to give it a more menacing, dynamic feel, so carefully designed GPU particles were the obvious solution. There is another potentially giant (depending on effect and shader design) performance gain if you are using GDF as a collision option: the emitter’s material doesn’t have to be translucent/additive/modulate in order to use collision! And just like that, it can eliminate one of the most common performance killers, particle overdraw. But if you have ever used GDF collision, you will know that it works only up to a certain distance; beyond that distance, there is again no collision. This is an artificial limit, set to limit the resource impact of GDF: the memory footprint and the maximum potential update area volume. In UE4, this can be adjusted via the console command “r.AOInnerGlobalDFClipmapDistance”; increase it to extend the GDF further from the camera position. Even with all this set, there is still a glaring performance problem, so I had to figure out a way to make the global distance field cheaper. For example, its cost would constantly go up to 2.5 ms per frame (22.5% of the ~11.1 ms budget at 90 Hz) on a Titan X (Maxwell), and since the Titan is about 2 × the performance of the targeted GTX 970, this would roughly result in about 45% of the targeted budget! The bottom line is that the cost was undeniably huge.

To optimize any feature of an engine like UE4 for your own needs, you have to understand that this is a general purpose engine. There are always a lot of things happening that might not be needed for your game but are needed for an average game; engine programmers can’t know the specific requirements of every game out there. In moments like this, open source provides game makers with unlimited freedom. The global distance field is a collection of clipmaps; each clipmap mainly holds the world space bounds that its volume texture holds data for, and an array of regions inside those bounds that need to be updated. There are two ways in which GDF updates its contents: partially, by checking the update regions array and updating only those regions, or by full volume updates.

Random holes in ocean surface due to missed GDF updates.

When you generate initial volume textures, this data is available on the GPU, even if you don’t update it regularly. So technically, you could just generate them and then leave them alone; in a static world, that is. If the player or anything else is moving, you will get artifacts. If your shaders rely on GDF, then your world might look like Swiss cheese – full of holes!

What now? Well, this isn’t useful for anything but the most static scenes, so I tried explicit update control at various intervals – allowing volume updates only when partial update is needed and is right on interval. The nature of ShowdownVR is that you can travel pretty big distances very fast or do any number of quick movements, which can result in partial updates missing regions, if you’re doing explicit control. So this still leaves you with occasional missing parts of updated GDF.

Final GDF solution

Partially updating the volume textures at most every 3rd frame gives Showdown the ability to visualize complex dynamic mesh movements relatively smoothly at 30 Hz. Full volume update calls are issued under the same player position and performance cushion conditions as reflections: checking every 11th frame and updating if the player’s position has changed by more than 1 meter. This finally ensures that any skipped region updates are redeemed. This design gives you explicit control over everything, and the result is that worst case frame timings fall from ~2.5 ms to ~0.8 ms. Linearly projected to min. specs, this should result in about 14.4% of the entire budget, down from 45%. Now, this was something I could work with!
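The budget percentages are simple to verify. The Python sketch below assumes a 90 Hz target (a frame budget of 1000 / 90 ≈ 11.1 ms) and uses the rough 2 × factor between the Titan X and the GTX 970 as a linear scale; the function name and parameters are illustrative.

```python
def budget_share(cost_ms, refresh_hz=90, hardware_scale=1.0):
    """Fraction of the frame budget used by a feature.

    hardware_scale linearly projects a measured cost onto slower hardware,
    e.g. 2.0 for a GPU with roughly half the performance.
    """
    frame_budget_ms = 1000.0 / refresh_hz
    return cost_ms * hardware_scale / frame_budget_ms


print(f"{budget_share(2.5):.1%}")                      # measured on Titan X
print(f"{budget_share(2.5, hardware_scale=2.0):.1%}")  # projected to GTX 970
print(f"{budget_share(0.8, hardware_scale=2.0):.1%}")  # after the optimization
```

This prints 22.5%, 45.0% and 14.4%, matching the figures quoted in the text.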

Using global distance field to visualize projectiles entering, traveling and exiting ocean surface.

Lights

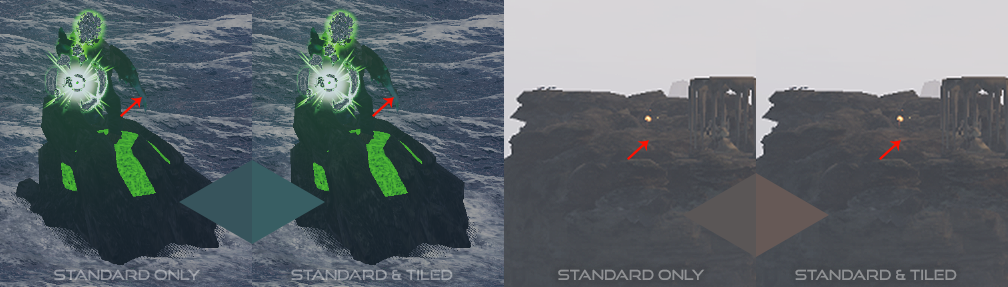

The way lights are rendered is an important performance factor. If there are many lights, tiled deferred shading should yield better performance than standard deferred, with no visual difference. Tiled shading has a fixed overhead due to tile setup, but aside from that, it scales better. The performance margin is significant enough that UE4 switches between the two techniques by checking how many light sources are affecting the screen and comparing that to the minimum light count required to switch from standard to tiled shading. By default, the engine switches to tiled at 80 lights. I would encourage every developer to measure how their game performs under both techniques, or figure out when this switch can/will occur. There are a few caveats with this that you should be aware of. First, the conditions and behavior I listed above are not really the whole story. See, at the moment, the engine has no fallback for simple lights like GPU particle lights. So, if you are using any simple lights and the default settings (TiledDeferredShading = 1), Unreal will mix both of these techniques in order to render all lights; for example, the directional light is always going to get rendered via standard shading, and simple lights are only supported on SM5 level hardware and via tiled shading. Right, so what does it mean, and why should you care? Showdown’s light rendering takes anywhere from about 1.0 ms to 3.0 ms on min. spec. hardware; this is not a lot, and it’s thanks to only using standard deferred shading, which in turn is thanks to not using any features that require tiled shading. These performance numbers were measured under light (aka start of the game) and very heavy (aka DOOM level difficulty) conditions. I measured the same with mixed shading and made sure to measure (mostly) tiled shading as well; as expected, it turned out that there were no measurable situations (that I could find) in the game in which I benefited from tiled shading. 
It always resulted in overhead. This made sense, because I was avoiding lights like the plague.

The heaviest scenes with the tiled approach enabled took about 5.5 ms, which resulted in a much wider light processing range (~1.0 ms to ~5.5 ms). So, I turned off tiled deferred shading and my results were much better and more consistent. But there is a caveat to that, as well. Remember how I don’t use any real lights (except one directional) and GPU particle lights are only supported by tiled shading at the moment? So, why the difference in performance at all; why was tiled light shading even flipping on? I had to examine the code to figure out what was going on. It turned out that I had two lights in one instance of a particle effect (the default UE4 fire I use in a torch); it’s a CPU based emitter, but it ended up switching to tiled shading every time it was in the scene. This was hard to track down because this effect is only used in one place, and the mere visibility of this object, or should I say any contribution it had to the scene, was causing tiled lights to be used. I first noticed this on very far away, wide shots of the landscape when profiling, because this was the only place tiled was consistently on. Of course, the reason for this was that somewhere in the distance, there was an emitter with lights that contributed 66 pixels (about 0.0008%) to the 4K image.

Simple lights not rendered in standard deferred.

If you’re asking yourself why UE4 behaves this way, the answer is that it’s a general purpose engine. If you want simple lights, then you must be prepared to pay the price for them. However, I will agree that there should be some kind of in-engine warning that using any number of simple lights forces the overhead of tiled shading upon you, because there is no fallback at the moment. To control this behavior, use the following console commands. First, “r.TiledDeferredShading” (range 0 to 1), default is 1. Setting it to 1 doesn’t mean the engine is always using tiled shading; it means that it will use tiled deferred when needed (any number of simple lights, or more lights than the minimum count), while setting it to 0 will force standard deferred. Forcing standard deferred costs some features and might result in horrible performance if you’re using a lot of lights, but in the right design (not using any simple lights and having an overall low light count) it will streamline the code path and prevent any unexpected tiled overhead. Second, “r.TiledDeferredShading.MinimumCount” (range 0 to X), default is 80. Use it to set the minimum light count at which to start using tiled deferred. This again showed the benefits of knowing your engine and squeezing the most out of it.
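The switching rule described above fits in one small function. This Python sketch is a simplified model of the behavior as described in this chapter, not the engine’s implementation; the function name is invented, and treating the threshold as “at least the minimum count” is an assumption based on the engine switching to tiled “at 80 lights”.

```python
def uses_tiled_deferred(tiled_enabled, num_lights, num_simple_lights,
                        minimum_count=80):
    """Simplified model of when tiled deferred shading kicks in.

    tiled_enabled mirrors r.TiledDeferredShading (1 = allowed, 0 = forced off)
    and minimum_count mirrors r.TiledDeferredShading.MinimumCount.
    """
    if not tiled_enabled:
        return False  # standard deferred only; simple lights are not rendered
    # Simple lights have no standard deferred fallback, so even one of them
    # forces the tiled path regardless of the total light count.
    return num_simple_lights > 0 or num_lights >= minimum_count


# The torch case: one directional light plus two simple lights from an emitter.
print(uses_tiled_deferred(True, num_lights=1, num_simple_lights=2))   # True
print(uses_tiled_deferred(False, num_lights=1, num_simple_lights=2))  # False
```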

Stencil lights

One of the cool techniques I’ve used in a ShowdownVR test map is a combination of custom depth and custom stencil to create one thousand point lights with practically no performance impact. It’s important to note that these are not physically correct: there isn’t any light contribution to the geometry itself, and of course no shadows.

Using stencil lights to display many distant point lights.

If you tried to accomplish the same with normal point lights, you would encounter multiple problems. First, it would kill the performance; even if you tweak the attenuation radius, there is no way you’re getting 1,000 lights on the screen, especially because there are so many other things going on. Even if you could, the lights would get culled at a certain distance. You can manually set light culling to a very low number, but you won’t get much better visual results, just an additional performance drop. All lights are drawn in a pre or post tone mapping phase, depending on where they are relative to the camera; it’s a similar system to that of GTA V, but they use tiles/images instead of simple bright, intense spots. In any case, these most likely shouldn’t be used up close, especially in VR. With tiles, you would probably spot the 2D nature of the post-process light halo texture, but it is a perfect technique for rich distant vistas. The reason I call these stencil lights is that their color and other properties can be set via the custom stencil buffer, which makes them much more configurable than just using the custom depth buffer. For example, these ships in reality usually have an additional white light at the back, a green light on their right and a red light on their left, which can be accomplished with stencil lights.

Water

The ocean, a giant water landscape. I must admit that while starting this little chapter, I feel euphoric. For the last two years, I’ve been quite literally obsessed with the way water surfaces look, feel and behave. Because it is composed of many pieces, make sure you check the physics part below the rendering chapter.

It’s important to remember a few key game requirements. Most of the game world is covered with a large body of water that is visible to the player at all times and has to fully support buoyancy. It’s also important to note that most of the water will be quite deep, because we’re in the middle of the ocean with a few islands and rocks popping out. And last but not least, it has to support a wide range of wave heights. This pretty much eliminates the straightforward approach of keeping a static water height and adding visual detail in the pixel shader, because the water level itself never moves. Also, any real physical simulation options, such as using small spheres with special physical properties and interpolating the water body along those properties, are not an option because of the very high resource demands per volume. So, there had to be another efficient way to convincingly model the ocean.

If you researched this area, you will know that there are certain established methods for dealing with such problems. Because we desire our waves to be as realistic as possible, we know that a sine function just won’t do. Any wave generated by it would exhibit a nice round shape, something you might observe on a calm lake, but definitely not something a wild sea storm produces. There are many sine function modifications that can help produce a more realistic picture, but they are inherently more artist-driven than physically based. The function to use is a physically based one, known as Gerstner waves; a much better approximation with its sharper peaks and broader troughs as well as detailed crests, because the vertices get pushed towards each crest, enabling higher geometric accuracy where it’s most needed. It also models some surface motion subtleties. Because the dynamic waves themselves are the main requirement, this was the most important criterion and as such a good place to start searching for available solutions. Because I was working with VXGI, a real-time GI solution from NVIDIA, I noticed that their GitHub contains a WaveWorks Unreal Engine 4 branch. This immediately sparked a large amount of interest in me. At the time, I didn’t suspect that it was going to take about a year and a half to organically evolve the system to the point where it’s very efficient, rich in features, highly configurable, looks good and works in VR. Along the way, I regularly tried alternative solutions, community projects and anything that showed potential. Today, I can finally say without a shadow of a doubt that as a subsystem to build upon, WaveWorks has no competition.
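To make the Gerstner shape a bit more tangible, here is a minimal Python sketch of the textbook formulation. It is not what WaveWorks runs internally and not ShowdownVR code; the function name, the wave tuple layout and the steepness parameterization are assumptions made for illustration only.

```python
import math

GRAVITY = 9.81  # m/s^2


def gerstner_displace(x, y, t, waves):
    """Displace a rest-position grid point (x, y) at time t.

    `waves` is a list of (amplitude, wavelength, steepness, (dir_x, dir_y))
    tuples with steepness in [0, 1]. Returns the displaced (x, y, z).
    All names and the parameter layout are illustrative assumptions.
    """
    px, py, pz = x, y, 0.0
    count = len(waves)
    for amplitude, wavelength, steepness, (dir_x, dir_y) in waves:
        k = 2.0 * math.pi / wavelength      # wave number
        omega = math.sqrt(GRAVITY * k)      # deep-water dispersion relation
        length = math.hypot(dir_x, dir_y)
        dir_x, dir_y = dir_x / length, dir_y / length
        phase = k * (dir_x * x + dir_y * y) - omega * t
        # Horizontal motion pulls vertices towards the crest (phase == 0).
        # Keeping steepness <= 1 prevents the surface from folding over itself.
        horizontal = steepness / (k * count)
        px -= horizontal * dir_x * math.sin(phase)
        py -= horizontal * dir_y * math.sin(phase)
        pz += amplitude * math.cos(phase)
    return px, py, pz
```

With a single wave travelling along X, a vertex resting at the crest stays put horizontally, while a vertex resting 2 m in front of the crest is pulled closer to it, which is exactly the "more geometry where it’s needed" effect described above.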

Shading

The water shader is quite efficient and features multiple levels of foam on waves, contact foam, shallow water foam and subsurface color simulation, full reflections, specular gradient, flat tessellation, tessellation displacements when running Shader Model 5, and world position offset on SM4. The entire water shader consists of 278 base pass and 39 vertex instructions along with 9 texture samplers, including the landscape height map (to differentiate from mesh distance fields), an environment cubemap, 3 foam textures with panners and an ocean stamp texture. It uses the default Lit shading model and Masked blend mode with a dither mask to compensate for translucency. The normal map, the foam that comes packed in 3 channels, and the displacements are all generated by the WaveWorks node; more on that below.

Ocean shader, reading WaveWorks vertex displacements.

NVIDIA WaveWorks

As much controversy as there was around NVIDIA’s GameWorks, I was shocked that no one, including me, ever pointed out that NVIDIA supports a GPU agnostic rendering path. It’s a CPU simulation model and it works wonderfully! On any GPU that runs ShowdownVR, there is the same underlying system, therefore providing the same gameplay conditions. There are three built-in quality settings: normal, high and extreme. The first one should be used to set up the CPU simulation model when needed, while the other two send the workload to the GPU at increasing levels of detail; at present, those two are only supported on NVIDIA GPUs.

Normal simulation quality (left) vs. extreme (right). Tessellation set by application.

WaveWorks is responsible for generating normal, displacement and foam output. Foam is a three channel gradient; each channel is focused on a specific part of wave progression, generally in height, where the top crest produces foam with greater intensity than the lower, wider parts of the wave. The normal output is the one that provides nice details out of the box when lighting the surface, and displacements combined with tessellation generate very nice dynamic geometry. Displacement readback has to be explicitly turned on and points requested via GFSDK_WaveWorks_Simulation_GetDisplacements. This function is good to note, because it can get quite expensive, so be very conservative with the number of points/locations you’re requesting; don’t go sampling thousands of them. The mesh upon which the ocean gets shaded is drawn in the form of a quad-tree via the WaveWorks C++ API, a great feature that enables geometry LODing and infinite ocean vistas by generating a specified number of levels and quad sizes around the player. The underlying API also exposes a few useful simulation input arguments, such as: wave amplitude, wind direction and speed, simulation wind dependency, wave choppiness scale, small wave fraction, time scale, foam generation threshold, foam generation amount, foam dissipation speed and foam falloff speed. ShowdownVR uses the Beaufort scale, a more realistic preset option that operates on the above variables, to simulate real life ocean wave generation. An efficient, highly configurable and extendable system.

Quad-tree used for frustum culling and mesh LOD and overview of a simulation pipeline.

Anti-aliasing

Being extremely picky about pixel quality and preserving the neatly shaded results made my choice of AA very hard, especially because in VR, sharpness is king. It’s more personal for me, because I do have problems with perceiving 3D in real life; my right eye has vertical astigmatism with some refraction error, so things tend to be more blurry. This makes my perception of depth without any correction (glasses) a bit wacky. But on the other side, it makes me quite susceptible to any depth problems in virtual environments, which comes in handy. For a long time, there was no antialiasing in this game. This was a decision I made after meticulously comparing all possible results and deciding that the world pops out significantly better in stereo without any AA. I knew this was a somewhat controversial decision for a VR game, but I stood by it; any configuration of FXAA or TXAA hurts the crisp visuals just too damn much. Other techniques, such as MSAA, could probably do it justice, but are notoriously incompatible with deferred renderers. However, I did spend a couple of days implementing all the features that Showdown needs in Oculus’ Forward Renderer, which by its nature works really well with MSAA. (You can read more about that under Renderer.)

AA perception and problems

Here’s something interesting I’ve discovered: some people who are more perceptive to depth may lose stereo vision and complain about focus. This has happened to me personally and I’ve noticed that some players were absolutely unable to set the focus right in some games. At the time, it really puzzled me, but now I believe that some of those cases were probably related to the AA used in those games. My advice is to be extremely careful when doing anti-aliasing, you might just anti-immerse your players. Of course, the image shimmering and aliasing still distracts and/or annoys some players, so maybe putting in an option in settings isn’t a bad idea. Anyhow, the two above mentioned techniques in Unreal have their own problems in VR.

There are noticeable artifacts when rotating your head with TXAA turned on, because it works in a temporal domain, using previous frames to improve the present one. This fact makes it also very much incompatible with DMRS (Dynamic Multi-Res Shading), because the splits between different resolutions can change from frame to frame, making one part lower resolution and smoother/blurrier than the other. The end result is a very noticeable swimming of the image. Not only is it distracting, it also breaks your stereo vision. Overall, this is a great method that works neatly on monitor games, but just happens to fail here. FXAA has other problems. On the good side, it’s pretty fast, highly configurable and finds most edges. On the dark side, (it is your father …) it lacks the input information to produce better results. From here on, I’ll refer to FXAA and similar algorithms when comparing.

The biggest problem and advantage with these post effect approaches is that they tend to work on analyzing the image, finding the edges and then blending the sharp lines/edges. This is bad, because there will inevitably be some in-surface aliasing that gets smoothed over, and you can say goodbye to really sharp textures in the final image. On the other side, it’s good because it’s pretty cheap and fast. What I wanted to accomplish was to preserve the sharpness of some objects, especially surfaces with high specular highlights, but also eliminate the cross material/object aliasing. And it had to be fast, without any additional buffers and with a small set of instructions. There were about 10 different techniques that came out of different approaches.

Final AA solution

Through testing, tweaking and a lot of Photoshop overlaying, I really came to like how FXAA works and some of the results it produces. Most rendered edges are eliminated, but unfortunately, so are the sexy specular highlights and contrasts that I want to have. To solve this problem, the algorithm follows the next steps; for optimization reasons, they are in a different order than in the (simplified) image provided below. First, the algorithm is supplied with an additional input, the specular GBuffer. From this I could create a specular mask for materials that are preferred aliased over anti-aliased; the non-foamy part of the water is one such place. Specular is not the same over the whole ocean surface; it depends on its state. The generated mask is then used to determine whether the current texel should be considered for AA, or whether we exit early. If it should be considered, a pretty simple local contrast filter is applied, using luminance and color differences to filter out edges. I’ve added some color bias to the filter, which keeps mild contrasts on blue (sea base color) and intensive reds (sun and volcano reflections in the water). This bias was hand tweaked over the day/night cycle to make sure it’s a general net benefit over the whole game. Only then do we know if the texel in question needs to be anti-aliased, and a low quality FXAA pass is applied. After that, there is an additional/optional step that adjusts the contribution of FXAA; this, besides the edge filter and FXAA input parameters, is very useful for tweaking sharpness and could even be exposed to the user. The resulting image is sharper in general, and the few places we prefer nice and sharp are left untouched; this in return also improves the algorithm’s speed, because we have two early exits. To do the described or something similar in code, you have to make sure that GBuffers are not released before AA, by adding a reference count and removing it after the pass processing is done. Otherwise, they’ll be released after the post processing stage, before the AA stage.

General steps used in BXAA; bias approximate anti-aliasing.
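The flow of those steps can be sketched per texel in a few lines of Python. This is only an illustration of the two early exits and the final blend, not the actual shader: the thresholds, the bias weights and the neighbour average standing in for the low quality FXAA pass are all assumptions.

```python
LUMA_WEIGHTS = (0.299, 0.587, 0.114)  # Rec. 601 luma weights


def biased_contrast(center, neighbours, bias):
    """Largest luma-weighted, colour-biased difference to any neighbour."""
    return max(
        sum(w * b * abs(c - n)
            for w, b, c, n in zip(LUMA_WEIGHTS, bias, center, texel))
        for texel in neighbours
    )


def bxaa_texel(center, neighbours, specular,
               spec_threshold=0.8,      # hypothetical specular mask cut-off
               edge_threshold=0.15,     # hypothetical contrast cut-off
               bias=(0.6, 1.0, 0.5),    # hypothetical: tolerate red/blue contrast
               fxaa_contribution=0.75): # hypothetical blend amount
    """Return the anti-aliased colour of one texel (RGB tuples in 0..1)."""
    # Early exit 1: surfaces we prefer aliased (strong specular).
    if specular >= spec_threshold:
        return center
    # Early exit 2: no significant biased contrast, so no edge to smooth.
    if biased_contrast(center, neighbours, bias) < edge_threshold:
        return center
    # Stand-in for the low quality FXAA pass: plain neighbourhood average.
    count = len(neighbours) + 1
    smoothed = tuple(
        (center[i] + sum(texel[i] for texel in neighbours)) / count
        for i in range(3)
    )
    # Optional last step: scale how much of the smoothed result is used.
    return tuple(c + (s - c) * fxaa_contribution
                 for c, s in zip(center, smoothed))
```

A highly specular texel and a texel with only a mild blue contrast both come back untouched, while a white texel surrounded by black gets pulled towards its neighbourhood.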

Render targets and viewports

When the modern VR era (for the lack of a better word) started in 2013 with Oculus DK1 shipping, most of us were rendering at native HMD resolution, and pretty much everyone was still using simple barrel distortion to compensate for the lens distortion. Most lenses are custom made nowadays, therefore they require custom shaders to pre-process the image for the lenses. All this leads us to the inevitable fact that rendering distorted images requires us to render at higher resolutions in order to achieve a true 1:1 pixel ratio (render target pixel : physical screen pixel) at the center of each lens. Depending on the device in question, this generally means we’re rendering at about 135% of the original display resolution; however, it doesn’t mean that we’re scaling the pixel count by a factor of 1.35, because when the scaling is applied to both the horizontal and vertical axis, the pixel factor is 1.8225. For example, instead of shading 2,592,000 pixels, you now have to shade 4,723,920 pixels. In order to handle these relatively high pixel counts, ShowdownVR uses three techniques.
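The arithmetic behind those numbers is easy to check. The sketch below assumes a 2160 x 1200 panel, which is my assumption for a display with 2,592,000 pixels; the function name is illustrative.

```python
def scaled_pixel_count(width, height, axis_scale):
    """Pixels to shade when both axes are scaled by `axis_scale`."""
    return round(width * axis_scale) * round(height * axis_scale)


native = 2160 * 1200                           # assumed panel resolution
scaled = scaled_pixel_count(2160, 1200, 1.35)  # 135% on each axis
pixel_factor = scaled / native                 # 1.35 squared, about 1.8225
```

Scaling each axis by 1.35 gives a 2916 x 1620 render target, which is where the 4,723,920 figure comes from.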

Screen percentage

A popular way of adjusting the performance, which also doubles as a finely adjustable SSAA (super sampling). It really is a preferred way to anti-alias the rendered image if the performance allows it. In VR, this is measured as PD (pixel density) for ease of use. What it really means is that a PD of 1.0 is about 135% SP. For example, in ShowdownVR a GTX 980 Ti level GPU should hit 90 Hz at 1.3 PD, and its range can be adjusted from 0.3 to 3. In the game itself, this is shown as screen percentage because it’s easier for people to understand. In reality, an SP of 100% is really an SP of 135% as perceived on a monitor, and an SP of 130% (1.3 PD) is really about 165% SP in the game. In post-processing, be sure to check out scaling quality, as it can help you get a more desirable result when deliberately combined with SP/PD values. Some SDKs will also provide you with built-in adaptive resolution scaling. For example, Oculus’ implementation starts to scale the image down when GPU utilization reaches 85%; this adjustment happens at most every two seconds to eliminate any perceivable visual artifacts. This depends on configuration and current implementation, but that’s the general idea. There is, however, a much superior way of dealing with these problems – read on.

NVIDIA Multi-Res Shading

Foveated rendering has been a long time coming and NVIDIA has already produced a couple of production ready versions of this tech. This essentially means rendering at high resolution at the focal point and drastically reducing rendering resolution radially around that point. This might not be the best solution for monitor games, because without eye tracking to determine the focal point, players are free to look at any part of the image, even the low resolution one. Although it should be pointed out that for a certain type of monitor games, with the camera focused on the central screen area, it should prove less noticeable. On the other hand, this works absolutely magnificently in VR. Because of the lens distortion, the overall resolution difference can be in the range of 30–40%, a significant percentage, yet it remains imperceptible! Note that because of the multiple versions NVIDIA develops, some of which are GPU architecture dependent, I’ll be talking about the ShowdownVR specific implementation, meaning that the basis for development was the Unreal Engine 4 Multi-Res Shading branch of the technology.

Multi-Res Shading configuration options in the game.

Multi-Res Shading by default works by efficiently splitting (more on that below) the image into 9 viewports, with 3 in a row. Let’s call these quads. The middle quad is the one with the highest resolution and therefore visual fidelity. The 8 side ones are of a low resolution and literally form a border around the high resolution area. Each corner border quad is adjusted via 4 variables: horizontal/X split, vertical/Y split, horizontal/X pixel scale and vertical/Y pixel scale. The split variables specify the quad’s width and height as a percentage measured from the outer image edges; since these are corner quads, each has two outer edges. The remaining 4 border quads get adjusted according to the corner ones: top middle, left middle, right middle and bottom middle. None of these four variables technically have to be uniform, but they commonly are, for three reasons: it’s much easier for users to configure and understand (check the image above), we usually want uniform resolution, and we usually want uniform split scaling at least along their respective axes and sides (if scaling X, then scale both the top and bottom split on the left side). But there is nothing preventing you from scaling X resolution to 20% and setting Y resolution to 66% or any other number; the effect of this will be a stretched image.
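To get a feel for how the four variables translate into viewport sizes and pixel savings, here is a small Python sketch of the 3 x 3 layout. It assumes symmetric splits on both sides of each axis, and the function and parameter names are made up for this example; it is not the Multi-Res Shading API.

```python
def multires_layout(width, height, split_x, split_y, scale_x, scale_y):
    """Return a 3x3 grid of (w, h) viewport sizes in pixels.

    split_x/split_y: border size as a fraction of the image, per side.
    scale_x/scale_y: pixel scale applied to the border quads.
    Names and the symmetric-split assumption are illustrative.
    """
    border_w = round(width * split_x * scale_x)
    center_w = round(width * (1.0 - 2.0 * split_x))
    border_h = round(height * split_y * scale_y)
    center_h = round(height * (1.0 - 2.0 * split_y))
    col_widths = (border_w, center_w, border_w)
    row_heights = (border_h, center_h, border_h)
    return [[(w, h) for w in col_widths] for h in row_heights]


def total_pixels(layout):
    """Sum of the pixel counts of all viewports in the layout."""
    return sum(w * h for row in layout for (w, h) in row)
```

For a 1000 x 1000 target with 25% borders rendered at half resolution, the center quad stays at 500 x 500, each corner shrinks to 125 x 125, and the total drops from 1,000,000 to 562,500 shaded pixels.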

Viewport multi-casting

This is probably the most important part of the whole process. To efficiently accomplish rendering at multiple resolutions, there has to be a way to split the image into X number of quads (viewports). Above, I’ve stated that by “default” Multi-Res uses 9 viewports to form a high fidelity central area and a low resolution border area. In reality, there is no fixed number of viewports that it supports; it could just as easily have been 25 viewports, for some performance cost. The efficiency comes from the stage after the vertex shader, where in our case NVIDIA’s fast geometry shader is used to calculate a conservative viewport mask from three vertex positions in clip space. Using this mask, a primitive is emitted/directed to the appropriate viewport (culling it from the others) or to any specified subset of them, including none, effectively culling it entirely. It’s important to note that using more viewports increases the chances of duplicating primitives, because geometry is more likely to overlap multiple viewports, resulting in some overhead. This is why there is a minute, scene dependent, but measurable performance decrease when comparing Multi-Res rendering without any pixel density downscaling to a normally rendered image. To avoid that, one simply has to use the technique for what it was meant to mitigate, a large pixel count, by decreasing pixel density to an amount that starts to exhibit net positive performance measurements. On the fragment shader side, every feature has to be addressed to produce a correct final composition, from point lights to bloom. One of the most common fragment functions converts render target UV from linear space to Multi-Res space and back; this ensures we’re working on the correct fragment.
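The idea of a conservative viewport mask can be sketched on the CPU like this. It is a simplification, not NVIDIA’s fast geometry shader: it works on positions that are already in normalized device coordinates (so it assumes all three vertices are in front of the camera) and uses the triangle’s bounding box; the function name and the bit layout are assumptions.

```python
def viewport_mask(triangle_ndc, split_x, split_y):
    """Conservative 9-bit viewport mask for a triangle.

    triangle_ndc: three (x, y) positions in NDC, i.e. in [-1, 1] when on
    screen. Bit (row * 3 + col) is set when the triangle's bounding box
    overlaps that viewport. A mask of 0 means the primitive is culled.
    """
    x_edges = (-1.0, -1.0 + 2.0 * split_x, 1.0 - 2.0 * split_x, 1.0)
    y_edges = (-1.0, -1.0 + 2.0 * split_y, 1.0 - 2.0 * split_y, 1.0)
    xs = [p[0] for p in triangle_ndc]
    ys = [p[1] for p in triangle_ndc]
    min_x, max_x = min(xs), max(xs)
    min_y, max_y = min(ys), max(ys)
    mask = 0
    for row in range(3):
        for col in range(3):
            overlaps_x = max_x >= x_edges[col] and min_x <= x_edges[col + 1]
            overlaps_y = max_y >= y_edges[row] and min_y <= y_edges[row + 1]
            if overlaps_x and overlaps_y:
                mask |= 1 << (row * 3 + col)
    return mask
```

A small triangle in the middle of the screen only sets the center bit, a screen-filling one sets all nine (this is where the primitive duplication overhead comes from), and an off-screen one produces an empty mask.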

Final image composed out of 9 viewports, crisp image in center vs. very low pixel density border.

Dynamic Multi-Res Shading

I soon discovered that the nature of how this technique works makes it very suitable for adaptive resolution scaling. But there are a few key things that you have to be aware of. Changing viewport sizes every frame will kill your framerate, so it has to be spaced over time. I’ve used the same absolute performance cushion as mentioned in reflections, scheduling updates 9 frames apart. To really benefit from this, the scaling of center and border regions has to be non-uniform. We want the outer regions to scale faster, and the scaling of the center viewport should occur only when truly necessary. This bias, of course, has to be applied both ways, therefore center quad density has to recover faster than the border area. The scaling curve for the two regions has to take into account a maximum margin between the two pixel density factors. Failing to do so will result in breaking stereo vision, because the density discrepancies become too big. For all of this to work, it’s crucial to measure the computer’s performance accurately and determine when any scaling is necessary, smartly accounting for GPU and CPU bottlenecks. There is no need for downscaling if you’re CPU bound; always keep the image as crisp and clear as possible, better to upscale and match the CPU’s frame time.
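The rules above (spaced updates, border first on the way down, center first on the way up, a capped gap between the two, no downscaling when CPU bound) fit into a tiny controller. This is a hedged sketch, not ShowdownVR’s code: densities are kept as integer percentages, and the step, gap, limits and headroom values are hypothetical.

```python
class DynamicMultiRes:
    """Toy controller for center/border pixel density, in percent."""

    def __init__(self, update_interval=9, step=10, max_gap=30,
                 min_density=30, max_density=100, headroom=0.85):
        # All default values are hypothetical tuning numbers.
        self.update_interval = update_interval
        self.step = step
        self.max_gap = max_gap
        self.min_density = min_density
        self.max_density = max_density
        self.headroom = headroom
        self.center = max_density
        self.border = max_density
        self._frame = 0

    def tick(self, gpu_ms, cpu_ms, budget_ms=1000.0 / 90.0):
        """Call once per frame with measured GPU and CPU frame times."""
        self._frame += 1
        if self._frame % self.update_interval != 0:
            return  # resizing viewports every frame is too expensive
        if gpu_ms > budget_ms and gpu_ms >= cpu_ms:
            self._scale_down()  # only when actually GPU bound
        elif gpu_ms < budget_ms * self.headroom:
            self._scale_up()

    def _scale_down(self):
        gap = self.center - self.border
        if self.border > self.min_density and gap < self.max_gap:
            self.border = max(self.min_density, self.border - self.step)
        elif self.center > self.min_density:
            self.center = max(self.min_density, self.center - self.step)

    def _scale_up(self):
        gap = self.center - self.border
        if self.center < self.max_density and gap < self.max_gap:
            self.center = min(self.max_density, self.center + self.step)
        elif self.border < self.center:
            self.border = min(self.center, self.border + self.step)
```

Under sustained GPU load the border drops in steps until the gap limit is hit, and only then does the center follow; once there is headroom again, the center recovers before the border does.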

Post-processing

It’s always very important to figure out what kind of post-processing you really need and why. I strove for a nice clean look, using as few effects as possible for the sake of immersion and performance. I wanted to use a bit of bloom at its lowest quality to indicate the projectiles close by and highlight the volcano lava parts. Personally, I don’t like too much or any eye adaptation in VR games; the eye should adapt itself to increased brightness because the HMD is the only source of light that your eyes see, even if it of course isn’t nearly as intense as in real life. So, eye adaptation is off. Then, a few no-brainers: dynamic shadows are off (read on for a detailed explanation below), any built-in GI is off, depth of field is off, motion blur is off, lens flares are off because you’re not looking through a camera anymore, and ambient occlusion is off as well, as the performance impact is absolutely not worth it; we’re talking any type of AO, even DF.

Light shafts, bad for Showdown’s gameplay and performance.

In some screenshots, you might notice light shafts; I use these for a certain type of promo shots in non-VR mode only, for example when facing the sun on a wide landscape shot. There are two reasons for not using them in-game. First, they are a nightmare in Showdown: they muck the screen up, distract and block the player’s view. They’re just bad for gameplay, because the plasma projectiles flying towards the player are very bright and usually numerous, and the environment is darker. Second, they kill performance, usually costing about 2 ms on min. spec., so that’s a no-no, especially in this game.

If you have read about reflections in one of the previous chapters, you know SSR and ambient cubemap are off, because that’s handled manually. Here’s an interesting thing about dynamic shadows: shadow quality is set to 3 and not to 0, because distance fields only get generated at this or higher setting, and the dynamic shadows are not turned off by the console command, but via direct light not casting any; this is very important for a successful DF generation.

I also wanted to do a bit of color grading at night, so that you can feel the darkness a bit more, but still see everything. Another neat design decision was to strive for overall darker scene colors, because it reduces the screendoor effect even more. That’s also one of the reasons the game starts at mid-day and quickly transitions to evening and night – to ease in players. Once they are comfortable and fully engaged in gameplay, most people totally forget about things like the screendoor effect, so when players find themselves at dawn or mid-day the next day, the battle is already in full swing! Another thing that I’ve paid a lot of attention to was the contrast. As mentioned in chapter Designing a world, there are very few abrupt contrasts, especially on thin objects; this naturally reduces aliasing, which is very important because I wanted to do as little anti-aliasing as possible. The world pops out better in stereo when the image is nice and sharp.

Along this path, there were a few neat discoveries. Since we’re almost never rendering at default screen percentage, we can use this to our advantage and reduce aliasing. Using low quality (0) down sampling (practically unnoticeable) and high quality up sampling (3 – directional blur with unsharp mask up sample) definitely produces the best kind of image quality; everything blends very nicely, but keeps its sharpness and contrast. Using the lowest quality up sampling, on the other hand, will produce a much higher frequency result, which may be desirable for various reasons, but obviously produces a lot of aliasing.

Up sampling quality from lowest to highest (0–3).

So it really mostly depends on the screen percentage at which the scene gets rendered, and it may actually prove very effective to do low quality sampling across the board. Low quality is a reasonable choice when rendering at full screen percentage (100%); anything over or under that should probably use an up sampling quality higher than 0. You must remember that this setting refers to all up/down sampling, and most effects are processed at much lower resolutions, taking the half res. scene color as input and up sampling at the end, so changing this has an impact on performance and on the overall look of the final composition. Sampling quality can be changed with console commands “r.Upscale.Quality” (range 0–3) and “r.Downsample.Quality” (range 0–1). At this time, down sample quality is clamped into this range, although the default value for it is 3, which I suspect is to be consistent with the default upscale quality setting of 3; the state of this should be checked with every new UE4 release, since there may be additional down sample quality levels added in the future. There’s still another factor that’s exclusive to VR. It’s an easily missed fact, but if you compare the rendering output on a monitor and in VR, you’ll notice that the images do not match – pixels usually go through at least one additional processing step to compensate for HMD optics. This step tends to produce a smoother image, so inputting a sharper image can produce results that match better with the monitor. Therefore, check your results both ways; something that’s too sharp on a monitor may be perfect in your HMD.

Color formats used in Unreal Engine 4.

Above, you can see the scene color format comparison. Showdown uses FloatR11G11B10 (2). I’ve measured about a 4% performance improvement over the default (4), and it loses only a barely noticeable amount of range.

Physics

This is naturally a very important part of the whole world, and as such can absolutely eat up any available CPU power. Sometimes, there are more than 100 intelligent moving objects visible in the scene that all use physics, all running at 90 Hz, and a lot of them use sub-stepping. The physics scene in Unreal is composed, if enabled, of two parts: asynchronous and synchronous. These have different properties, and by default a dynamic object can only interact with objects in its own scene, therefore not detecting its parallel brothers in the other one. Generally, it’s a good idea to put as many objects as possible into the asynchronous scene, obviously because it’s non-blocking; it can run over a number of frames, threaded. This is suitable for objects that don’t need collision detection that runs totally in sync with what’s displayed on the screen, like debris, explosion effects etc. Every buoyancy object in ShowdownVR finds itself in the async scene, and every projectile flies through the synchronous one to precisely detect any collisions.

Buoyancy

The whole system is built upon NVIDIA’s WaveWorks. Under the hood, there is a five part system. At the bottom, we have WaveWorks with displacement readback running on DirectX, with exposed functions for wind direction, Beaufort scale etc. On top of that, we have a multipart ocean manager system. Its main role is to control the parameters passed to WaveWorks and, even more importantly, to efficiently calculate the ocean surface from a certain point. Remember, readback is a vertex displacement from a certain point on the ocean surface, so in order to do a ray cast, you have to iteratively search for your point on the surface. Here we have a few optimizations, aside from the standard things like comparing squared vector lengths.

Ocean actor exposed properties.

Ocean actor is the top level blueprint derived from the Ocean class on the C++ side. It takes in the arguments for adjusting the underlying algorithms. Among other things, it also exposes the Displacement Solver LOD system. There you can specify how accurately you want to search for your surface points. You do that by setting your search area. First, you can select the step number (Samples Rows) and step size (Sample Quad Size), then you can select at what distance/error margin (Stop Search at Dist) you’re happy with your search, so that when accurate enough results are found early on, it can stop searching deeper. On the C++ side, you can also specify the multiplier for all these settings relative to the Beaufort scale; it comes in handy if you want to really tweak the performance. A neat little detail about the displacement solver LOD system is that the LOD levels are fully dynamic. In ShowdownVR, this is used on the lowest LOD level (element 0 in the picture above); the level of quality it provides is only really needed for one thing, accurately detecting the ocean’s surface at the player’s eye level. Because the displacements get larger with increased wind vector magnitude, LOD0 gets adjusted according to the wind dependent Beaufort scale, changing search area size and sample count with it. Keep in mind that the main goal of this system is to be as dynamic, scalable and accurate as possible, so that you can have any wave size you want. Another optimization on the C++ side is that we take the wind vector into account when searching for our world ocean surface location. Instead of just uniformly looking around the specific point, we can now predict where the displacements are larger and then scale/stretch the search area under the hood to find the results faster.
The points that ocean manager reads are simple actors (of class FloatingActor) by themselves that track a couple of things, most notably their size, local relative location, absolute world location, along with current wave height with absolute world location, and are stored in a static list that gets processed by the ocean manager, all in one batch. At the top, there is a three part system.
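To show why the surface lookup is a search and not a single read, here is a minimal Python sketch of a grid-based displacement solver with LOD levels and an early exit. It is my reading of the description above, not the actual ocean manager: the `displacement` callback stands in for the readback, and the tuple layout (sample rows, quad size, stop distance) only loosely mirrors the exposed properties.

```python
import math


def find_surface_height(target, displacement, lods):
    """Find the water height above the world-space point `target` (x, y).

    displacement(x, y) returns (dx, dy, dz) for a rest-position point,
    standing in for the displacement readback. `lods` is a list of
    (sample_rows, quad_size, stop_dist) tuples, searched in order.
    Returns (height, horizontal_error). All names are illustrative.
    """
    tx, ty = target
    center = target
    best_rest = target
    best_height = 0.0
    best_err_sq = math.inf
    for sample_rows, quad_size, stop_dist in lods:
        half = (sample_rows - 1) / 2.0
        for i in range(sample_rows):
            for j in range(sample_rows):
                px = center[0] + (i - half) * quad_size
                py = center[1] + (j - half) * quad_size
                dx, dy, dz = displacement(px, py)
                # Compare squared distances; no square root in the loop.
                err_sq = (px + dx - tx) ** 2 + (py + dy - ty) ** 2
                if err_sq < best_err_sq:
                    best_err_sq, best_height = err_sq, dz
                    best_rest = (px, py)
        if best_err_sq <= stop_dist ** 2:
            break  # accurate enough, no need to search deeper
        center = best_rest  # refine around the best candidate so far
    return best_height, math.sqrt(best_err_sq)
```

Each level samples a grid of rest positions, displaces them, and keeps the one that lands closest to the requested location; the next level repeats this with a finer grid around that candidate unless the error is already below the stop distance.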

BuoyancyMaster component is placed on all buoyant objects.

The buoyancy master collects and tracks points on a specific object, and then calculates and applies the buoyant force. Then, we have the general buoyancy object/point for reading and applying force (which the buoyancy master tracks and the ocean manager reads and writes to), and the additional gravity alpha variable (Gravity Multiplier) that gets modified by the math model of the ship. The equation that does this takes inputs from X points (from the buoyant object) and outputs the gravity alpha that gets processed in the next buoyancy tick. The output of this equation is based on angles that get calculated from the X points, so in reality, it predicts what is happening to the ship based on its rotation, and then that gets applied to the buoyancy.
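As a rough illustration of the per-point force part, here is a minimal Python sketch. It uses a generic submersion-based model that I am assuming for the example, not the actual BuoyancyMaster math, and it leaves out the gravity alpha equation entirely; the class, field and parameter names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class SamplePoint:
    z: float             # world height of the sample point
    water_height: float  # ocean surface height at the point's location
    size: float          # depth over which the point becomes fully submerged


def buoyant_forces(points, mass, buoyancy_ratio=2.0, gravity=9.81):
    """Upward force per sample point, in newtons.

    Each point carries an equal share of the maximum buoyancy, scaled by
    how deep it is under water. buoyancy_ratio > 1 means the fully
    submerged object pushes up harder than gravity pulls it down.
    """
    max_force_per_point = buoyancy_ratio * mass * gravity / len(points)
    forces = []
    for point in points:
        depth = point.water_height - point.z
        submerged = min(max(depth / point.size, 0.0), 1.0)
        forces.append(max_force_per_point * submerged)
    return forces
```

Because each point reads its own wave height, a wave passing under one side of the hull produces uneven forces across the points, which is what tilts the object.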

Let’s look at the image above. On the left, there is a simple overview of components contained on this actor (ship blueprint); it contains child actors of type FloatingActor that are derived from C++ class WaterHeightSamplePoint and hold all basic information about the specific point as explained above. In the middle, we have our buoyant object with 6 cube outlines around it (FloatingActors). The most interesting aspect of this image is the right side, where you can see the exposed properties. Some of them, like MyMass, Buoyancy Target and Water Height Sample Points, get populated automatically at initialization of this actor. Others are there for us to play with. From top to bottom, we have Offset Water Surface Z, this comes in handy when we want to do just that, for example, control the sinking of an object. Secondly, Update Interval is a crucial property, which directly impacts how many times per second we want to calculate our buoyancy force. On this specific actor, it’s set to 0.033/30 Hz, but it also self-adjusts to 0.011/90 Hz when the player teleports to the ship. Why? Well, to cause sea sickness, of course! Actually, to be more immersive; this might actually be a problem for people who experience such sickness easily. Luckily, being on a ship isn’t mandatory – not a coincidence.

Miscellaneous systems

Loading system

This system might be somewhat unorthodox, but produces very cool results. Technically, it’s composed of multiple pieces, but the end result is that loading happens only when first entering the game, asynchronously during rotation reset and the loading/tip screen. After that, every object is recycled, eliminating the need to destroy and load the same object again. Essentially, the world automatically assembles itself back to its initial state, recreating only the few destroyed objects, making jumping in and out of the game instantaneous.
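The recycling part is essentially object pooling. A minimal, engine-independent sketch could look like the following; the class and its callbacks are hypothetical and only illustrate the idea of resetting and reusing objects in place of destroying and reloading them.

```python
class ObjectPool:
    """Reuses released objects so nothing is constructed twice."""

    def __init__(self, factory, reset):
        self._factory = factory  # creates a brand new object (expensive)
        self._reset = reset      # restores an object to its initial state
        self._free = []
        self.created = 0

    def acquire(self):
        """Hand out a recycled object if one is free, else create one."""
        if self._free:
            return self._free.pop()
        self.created += 1
        return self._factory()

    def release(self, obj):
        """Reset the object and keep it around for the next round."""
        self._reset(obj)
        self._free.append(obj)
```

Restarting a round then boils down to releasing everything back to the pool and acquiring it again, so only objects that were never pooled have to be created from scratch.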

LOD systems

Pretty much every object has some sort of a LOD system to conserve resources. This goes for meshes, textures and actor ticking. For example, projectiles tick at different rates depending on their position relative to the player, reaching 90 Hz when the object is close enough to the player to matter. Most of the distant objects are put into the async physics scene and tick at a lower rate, and others are view dependent. Enemy movements are also limited to a lower tick rate that depends on their location relative to the player. For the most part, all of this saves huge amounts of CPU power.
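A distance-based tick LOD can be as simple as interpolating the tick rate between a near and a far range. The sketch below assumes a linear falloff and made-up distances and rates; only the 90 Hz upper bound comes from the text above.

```python
def tick_interval(distance, near=1000.0, far=10000.0,
                  max_hz=90.0, min_hz=10.0):
    """Seconds between ticks for an object at `distance` from the player.

    near/far and min_hz are hypothetical tuning values; objects closer
    than `near` tick at the full 90 Hz.
    """
    if distance <= near:
        return 1.0 / max_hz
    if distance >= far:
        return 1.0 / min_hz
    alpha = (distance - near) / (far - near)
    return 1.0 / (max_hz + (min_hz - max_hz) * alpha)
```

The resulting interval would then be applied to the object’s tick, so a projectile far out at sea updates a handful of times per second and smoothly ramps up to full rate as it closes in on the player.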

Open source

At pretty much every expo where ShowdownVR was shown, there were problems even minutes into the show – quite standard, I guess. But the only reason why the majority of those problems got solved in time was that Unreal Engine 4 is open source. If you can’t debug the majority of your product’s code (which in every non-AAA game is the engine itself), then how the hell could you make a reliable product? Engines are very complex, contain thousands of bugs and hundreds of undocumented features. The latter can help you improve your game or may already be unintentionally degrading it, so finding and using them to your advantage is only possible with open access to the source code and full ability (license or otherwise) to modify and ship it. I sincerely hope that by now, it’s crystal clear why open source engines are the only way to go in the future. ShowdownVR and everything around it would never even have had a chance to exist if Unreal Engine 4 weren’t open source.

And the next time someone writes you about doing an exclusive demo of your VR showcase for president, don’t make him make his own, or … Thank you, Epic.

Special thanks

These people supported and helped me over the course of my career; without them, I wouldn’t be where I’m at today. Thank you.

Degen Vesna, mom

Špela Vrbovšek, teammate / girlfriend

Andraž Logar, mentor / CEO at Third Frame Studios (3fs)

Peter Kuralt, teammate at Frontseat Studio

Luka Perčič, teammate / CEO at ZeroPass

Matt Rusiniak, technology evangelist and totally rad dude at NVIDIA

Callum Underwood, developer support at Oculus

Eva Mastnak, teammate / photographer

Nik Anikis Skušek, master painter

JOKER CREAM TEAM™, authors of the best damn gaming magazine on the planet – Joker